How Node.js Handles Multiple Requests with a Single Thread

When developers first hear that Node.js is single-threaded, the usual reaction is confusion.

“If there’s only one thread, how can Node.js handle thousands of users at the same time?”

Traditional backend servers often create a separate thread for every incoming request. More users means more threads. More threads means more memory usage and more CPU overhead.

Node.js takes a completely different approach.

Instead of creating a thread for every request, Node.js follows a much smarter idea:

Don’t wait. Delegate the work and keep moving.

That simple principle is what lets Node.js stay fast and scale well for I/O-heavy web applications.

In this article, we’ll understand how Node.js handles multiple requests using a single thread, how the event loop works, and why this architecture performs so well.

Single Threaded Nature of Node.js

Before looking at Node.js itself, let’s define two important terms: process and thread.

Process vs Thread

A process is a running application.

For example:

Chrome browser = one process

VS Code = another process

Your Node.js app = another process

A thread is a worker inside that process.

Think of a restaurant kitchen:

Kitchen = process

Chefs = threads

Many traditional servers use multiple threads (chefs) to handle multiple requests simultaneously, but Node.js works differently.

Node.js Uses a Single Main Thread

Node.js runs JavaScript on:

one main thread

one call stack

one memory heap

This means only one piece of JavaScript executes at a time.
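Here is a minimal sketch of what "one piece of JavaScript at a time" looks like in practice. The `order` array and the loop size are illustrative choices, not part of any Node.js API. Even with a 0 ms delay, the timer callback can only run after the synchronous loop has released the main thread.

```javascript
// Records the order in which code runs on the single main thread.
const order = [];

// Ask for a callback "as soon as possible" (0 ms delay).
setTimeout(() => {
  order.push("timer callback");
  console.log(order.join(" -> "));
}, 0);

// A synchronous loop: nothing else can run until it finishes.
let sum = 0;
for (let i = 0; i < 1e7; i++) {
  sum += i;
}
order.push("loop finished");
```

Running it prints `loop finished -> timer callback`, because the callback has to wait for the call stack to empty.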

At first, this sounds like a limitation.

But most backend applications spend the majority of their time:

waiting for databases

waiting for APIs

waiting for file systems

waiting for network responses

These are called I/O operations.

Instead of sitting idle and waiting for these operations to finish, Node.js delegates them and keeps handling other requests.

That’s the foundation of Node.js architecture.

Event Loop Role in Concurrency

The real power of Node.js comes from the Event Loop.

The event loop is a continuously running mechanism that checks:

Is there a new task?

Is any async task completed?

Is there a callback waiting to execute?

It keeps repeating this cycle extremely fast.

The Chef Analogy

Imagine a restaurant with only one chef.

A blocking system would work like this:

Take Order #1

Cook it completely

Deliver it

Then take Order #2

Every customer waits.

How Node.js Works

Node.js behaves like a smart chef.

Takes Order #1

Puts steak on the grill

Sets a timer

Immediately starts Order #2

Then Order #3

The chef doesn’t stand there watching the steak cook.

Instead:

the grill handles cooking

timers notify when food is ready

the chef keeps serving customers

That chef is the Event Loop.

This is how Node.js handles many requests concurrently without blocking the main thread.
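The smart chef can be sketched in a few lines. This is only an illustration: `cook` and `takeOrder` are made-up names, and `setTimeout` stands in for the grill (any slow operation that finishes on its own).

```javascript
const log = [];

// The "grill": finishes after `ms` milliseconds without occupying the chef.
function cook(order, ms) {
  return new Promise((resolve) => setTimeout(() => resolve(order), ms));
}

// The chef starts an order, then is free again until the food is ready.
async function takeOrder(order, ms) {
  log.push(`start ${order}`);
  const ready = await cook(order, ms);
  log.push(`serve ${ready}`);
}

// All three orders are started back to back, before any of them is served.
const allServed = Promise.all([
  takeOrder("#1", 60),
  takeOrder("#2", 20),
  takeOrder("#3", 40),
]);

allServed.then(() => console.log(log.join(", ")));
```

The output is `start #1, start #2, start #3, serve #2, serve #3, serve #1`: every order starts immediately, and each one is served as soon as its own cooking time is up, not in the order it was taken.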

Concurrency vs Parallelism

This is one of the most misunderstood concepts.

Concurrency:

Concurrency means:

Multiple tasks making progress without blocking each other.

Node.js is primarily concurrent.

Parallelism:

Parallelism means:

Multiple tasks literally running at the same exact time on multiple CPU cores.

Node.js does not execute JavaScript in parallel by default.

Instead, it efficiently switches between tasks using the event loop.
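A small sketch of that switching, assuming two made-up tasks named A and B. Each task gives control back to the event loop at every `await`, so the two take turns on the same thread instead of running at the same instant. The `tick` helper is an illustrative wrapper around `setImmediate`.

```javascript
const steps = [];

// Resolves on a later turn of the event loop.
const tick = () => new Promise((resolve) => setImmediate(resolve));

// Each task does two small steps and yields to the event loop after each one.
async function task(name) {
  for (let i = 1; i <= 2; i++) {
    steps.push(`${name}${i}`);
    await tick();
  }
}

const finished = Promise.all([task("A"), task("B")]);

finished.then(() => console.log(steps.join(", ")));
```

This prints `A1, B1, A2, B2`. Both tasks make progress without blocking each other (concurrency), yet only one of them is ever executing at a given moment (no parallelism).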

Delegating Tasks to Background Workers

Here’s something many beginners don’t realize:

Node.js is single-threaded for JavaScript execution, but not everything runs on that single thread.

Under the hood, Node.js uses:

libuv

libuv provides:

the event loop

async I/O handling

thread pool support

What Gets Delegated?

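Certain operations would block the main thread if they ran on it directly, either because they are CPU-heavy or because the operating system offers no non-blocking API for them.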

Examples include:

file system operations

DNS lookups

cryptography

compression

These tasks are delegated to background worker threads in libuv’s thread pool.

Meanwhile, the main thread remains free to handle incoming requests.

For network operations like HTTP requests and database communication, Node.js often relies directly on the operating system’s asynchronous I/O system.
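As a rough illustration of delegation, the sketch below starts two password hashes with the asynchronous `crypto.pbkdf2`, which does its work off the main thread. The `hash` wrapper, the `events` array, and the password, salt, and iteration count are all placeholder choices for the demo.

```javascript
const crypto = require("crypto");

const events = [];

// Wraps the callback-based crypto.pbkdf2 in a promise.
function hash(label) {
  return new Promise((resolve, reject) => {
    crypto.pbkdf2("password", "salt", 100000, 32, "sha256", (err, key) => {
      if (err) return reject(err);
      events.push(`${label} done`);
      resolve(key.toString("hex"));
    });
  });
}

// Both hashes are handed off; the main thread does not compute them itself.
const hashes = Promise.all([hash("hash 1"), hash("hash 2")]);

// This line runs right away, before either hash has finished.
events.push("main thread is free");

hashes.then(() => console.log(events[0]));
```

The first recorded event is `main thread is free`: the hashing callbacks can only run after the main thread has finished its own synchronous work.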

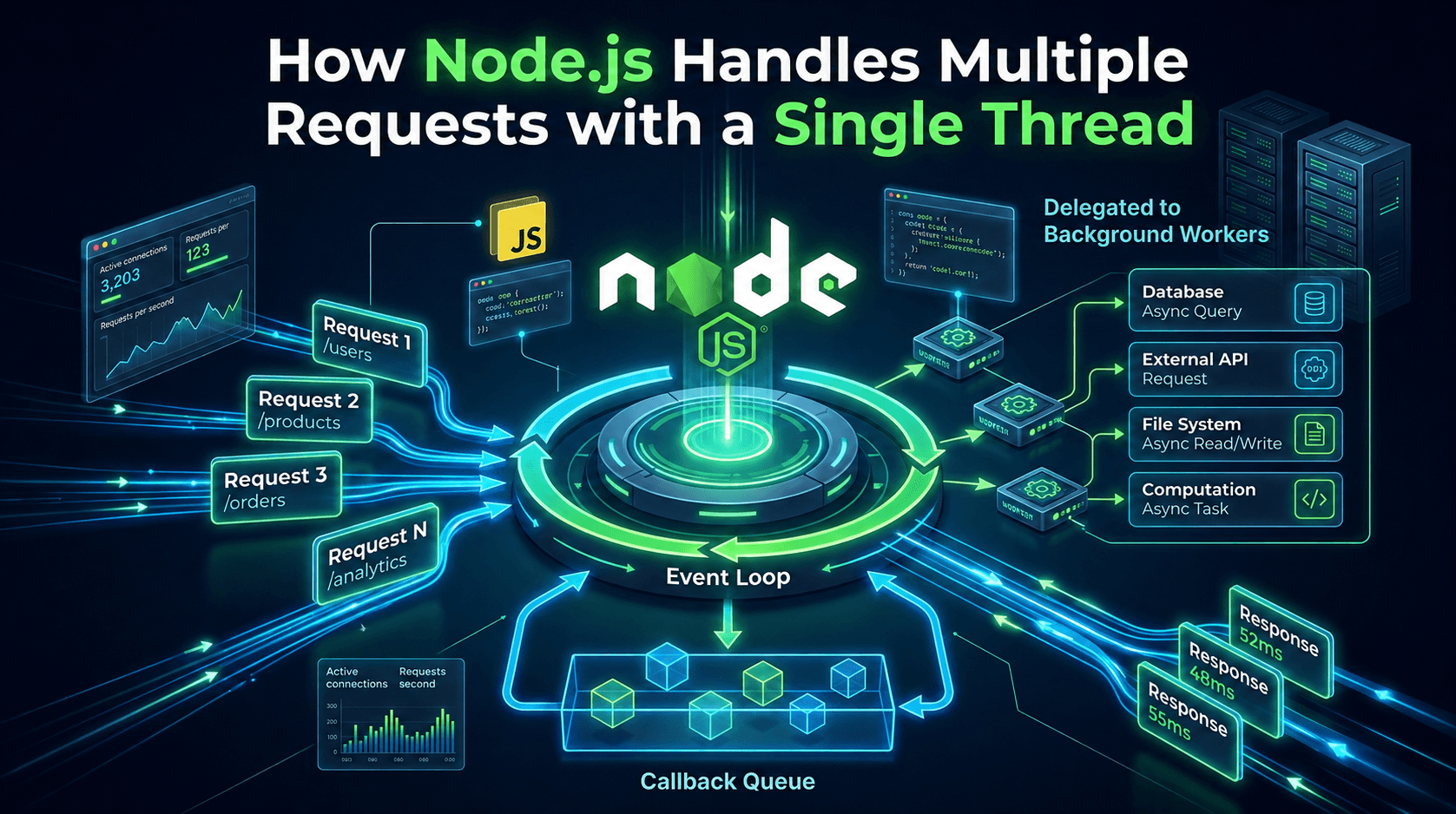

Visual Flow

Client Request

↓

Main Thread Receives Request

↓

Async Task Delegated

↓

Background Worker / OS

↓

Task Completes

↓

Callback Queue

↓

Event Loop Executes Callback

↓

Response Sent

Handling Multiple Client Requests

Let’s say three users hit your API at the same time.

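    // Assumes an Express `app` and an async `fetchUsers()` helper defined elsewhere.
    app.get("/", async (req, res) => {
      // `await` pauses only this handler; the event loop keeps serving other requests.
      const users = await fetchUsers();
      res.json(users);
    });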

Here’s what happens internally.

Step 1: Request Arrives

User A sends a request.

The main thread receives it.

Step 2: Async Task Starts

The database query is delegated immediately.

The main thread does not wait.

Step 3: Event Loop Continues

While User A’s database query runs in the background:

User B’s request arrives

User C’s request arrives

Node.js accepts both immediately.

Step 4: Completed Tasks Return

When database results are ready:

callbacks enter the queue

event loop picks them up

responses are sent

This creates the illusion that many things are happening simultaneously.

But internally:

JavaScript still runs on one thread

Node.js simply avoids blocking it
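The four steps can be tried out with a small self-contained script. It is a sketch under a few assumptions: it uses the built-in `http` module in place of Express, `fetchUsers` is a hypothetical stand-in that simulates a 100 ms database query with a timer, and the script sends three requests to itself to play the role of Users A, B, and C.

```javascript
const http = require("http");

const log = [];

// Hypothetical stand-in for a database call: resolves after 100 ms.
function fetchUsers() {
  return new Promise((resolve) =>
    setTimeout(() => resolve([{ id: 1, name: "Ada" }]), 100)
  );
}

const server = http.createServer(async (req, res) => {
  log.push("request received");
  const users = await fetchUsers(); // the handler pauses here; the thread does not
  res.setHeader("Content-Type", "application/json");
  res.end(JSON.stringify(users));
  log.push("response sent");
});

// Sends one GET request and resolves with the response body.
function get(port) {
  return new Promise((resolve, reject) => {
    http
      .get({ host: "127.0.0.1", port, path: "/", agent: false }, (res) => {
        let body = "";
        res.on("data", (chunk) => (body += chunk));
        res.on("end", () => resolve(body));
      })
      .on("error", reject);
  });
}

// Starts the server on a free port, fires three requests at once, then shuts down.
function run() {
  return new Promise((resolve, reject) => {
    server.listen(0, "127.0.0.1", async () => {
      try {
        const { port } = server.address();
        const bodies = await Promise.all([get(port), get(port), get(port)]);
        resolve(bodies);
      } catch (err) {
        reject(err);
      } finally {
        server.close();
      }
    });
  });
}

const result = run();
result.then(() => console.log(log.join("\n")));
```

On a typical run, the log shows all three `request received` lines before the first `response sent`: the server accepts every request while the earlier "queries" are still pending.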

Blocking vs Non-Blocking Example

Blocking Code:

    const fs = require("fs");
    // Blocks the main thread until the whole file has been read.
    const data = fs.readFileSync("largeFile.txt", "utf8");
    console.log(data);

Problem:

execution stops

event loop is blocked

other requests cannot be processed

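Non-Blocking Code:

    const fs = require("fs");
    // Returns immediately; the callback runs once the read has finished.
    fs.readFile("largeFile.txt", "utf8", (err, data) => {
      if (err) return console.error(err); // e.g. the file does not exist
      console.log(data);
    });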

Here:

file reading happens in background

event loop continues running

other users can still be served

This non-blocking behavior is the reason Node.js performs so efficiently.
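To see the difference side by side, the sketch below writes a small temporary file and reads it both ways. The file name and the `order` array are illustrative; the temporary file is only there so the script runs without needing `largeFile.txt`.

```javascript
const fs = require("fs");
const os = require("os");
const path = require("path");

// Create a throwaway file so the example is self-contained.
const file = path.join(os.tmpdir(), `node-demo-${process.pid}.txt`);
fs.writeFileSync(file, "hello from disk");

const order = [];

// Blocking: the next line cannot run until the read has finished.
const syncText = fs.readFileSync(file, "utf8");
order.push("sync read done");

// Non-blocking: readFile returns at once and calls back later.
const asyncRead = new Promise((resolve, reject) => {
  fs.readFile(file, "utf8", (err, data) => {
    if (err) return reject(err);
    order.push("async read done");
    fs.unlinkSync(file); // clean up the temporary file
    resolve(data);
  });
});
order.push("code after readFile");

asyncRead.then(() => console.log(order.join(" -> ")));
```

This prints `sync read done -> code after readFile -> async read done`. The line after `readFile` runs before the file content arrives, which is exactly the window Node.js uses to serve other requests.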

Why Node.js Scales Well

Node.js performs exceptionally well for I/O-heavy applications because of its lightweight architecture.

Low Memory Usage

Traditional thread-per-request servers dedicate one thread to each request.

More threads mean:

more RAM usage

more CPU scheduling

more overhead

Node.js avoids this by using a single event loop.

Thousands of connections can remain open with relatively low memory usage.
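A rough way to get a feel for this is to keep thousands of operations pending at once on the one thread. The sketch below uses 10,000 timers as a stand-in for idle connections, so it is only an approximation: real sockets also cost memory in the operating system, and the heap figure it prints will vary from run to run.

```javascript
const PENDING = 10000;
let completed = 0;

// Resolves after `ms` milliseconds without occupying the main thread.
const wait = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

const heapBefore = process.memoryUsage().heapUsed;

// Start 10,000 waits at once; no extra threads are created for them.
const all = Promise.all(
  Array.from({ length: PENDING }, async () => {
    await wait(50);
    completed += 1;
  })
);

// Measured while every wait is still pending.
const heapDuring = process.memoryUsage().heapUsed;

all.then(() => {
  const extraMb = (heapDuring - heapBefore) / 1024 / 1024;
  console.log(`${completed} waits finished on one thread`);
  console.log(`extra heap while pending: about ${extraMb.toFixed(1)} MB`);
});
```

Each pending operation costs a small object on the heap rather than a whole thread with its own stack, which is the main reason the memory footprint stays low.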

Efficient Handling of I/O Operations

Most backend applications spend more time waiting than computing.

For example:

waiting for databases

waiting for APIs

waiting for file reads

Node.js uses this waiting time efficiently by continuing to serve other users.

Minimal Context Switching

In multi-threaded systems, the operating system constantly switches between threads.

This process is called context switching, and it adds overhead.

Since Node.js mainly uses one thread for JavaScript execution, it avoids most of that overhead.

Best for Real-Time Applications

Node.js works especially well for:

APIs

chat applications

streaming platforms

real-time dashboards

multiplayer games

Anywhere many users stay connected simultaneously, Node.js performs extremely well.

Conclusion

The biggest misconception about Node.js is:

“Single-threaded means it can only handle one request at a time.”

That’s not true.

Node.js handles thousands of concurrent requests efficiently because, as long as your code avoids blocking calls, it does not waste time waiting for slow operations to complete.

Instead:

async tasks run in the background

the event loop keeps moving

completed operations return through callbacks

The result is a lightweight, fast, and highly scalable backend architecture.

Once you understand the event loop and non-blocking I/O, the magic behind Node.js starts making perfect sense.